Background

From time to time the following error occurs in DeltaShell:

SQL logic error or missing database at most 64 tables in a join

This indicates that Nhibernate is attempting to fetch data from too many different tables at the same time. Note that it doesn't matter at all if there is any data in those tables, all that matters is how big the biggest class hierarchy is.

That hierarchy usually grows if you have many plugins loaded, for example many plugins have ProjectItems, so the ProjectItem hierarchy can get pretty big! Nhibernate by default attempts to load all objects at once, but as the data is spread over many tables it uses SQL JOINs to do so. This is fine, up to the point where it gets above (or equal) to 64 joins, as that is the limit of the database driver: SqlLite.

To prevent Nhibernate from joining so many tables together, you have to add instructions in the mapping files to prevent this. Unfortunately there doesn't seem to be a simple permanent fix, and instead as the relationships change and grow, this problem resurfaces every few months, sometimes hidden and sometimes as a big exception whenever you try to load any dsproj. Do note however that in production environments this issue is much less likely to occur, as usually only a few plugins are shipped in a single install.

Solution

There seem to be two options to prevent these excessive joins:

- Make relationships lazy (not recommended -> can introduce problems with code not compatible with laziness)

- Set (non-lazy) relationships to fetch=select, Example:

<many-to-one name="CalculationSelection"

lazy="false"

fetch="select"

cascade="all-delete-orphan"

class="DeltaShell.Plugins.MorphAn.Models.CalculationSelection"

column="calculation_selection_id"/>

Recommended workflow:

- Turn 'just my code' off just prior to opening an offending dsproj.

- Determine the extend of the problem: copy the sql query causing the exception (part of command object) into a text editor

- Count the number of occurrences of the string 'JOIN' in the file (as a baseline) -> typically between 64 and 100

- Now the hard part: scan through that query and identify plugins / classes that seem to be occurring a lot, or combinations you wouldn't expect

- Apply fetch=select on one or more (non-lazy!) relationships where you think it would reduce the number of joins (see rev #21942 as an example)

- Reload the project; if the exception still occurs check if the number of JOIN has actually lessened (using text editor)

- If not, revert, if it has, find another relationship to change.

- Continue until no exception (preferably a bit longer: eg <40 joins!)

http://www.codeproject.com/Articles/271598/Application-DoEvents-in-WPF

[SecurityPermissionAttribute(SecurityAction.Demand, Flags = SecurityPermissionFlag.UnmanagedCode)]

public void DoEvents()

{

DispatcherFrame frame = new DispatcherFrame();

Dispatcher.CurrentDispatcher.BeginInvoke(DispatcherPriority.Background,

new DispatcherOperationCallback(ExitFrame), frame);

Dispatcher.PushFrame(frame);

}

public object ExitFrame(object f)

{

((DispatcherFrame)f).Continue = false;

return null;

}

- Switch to .NET 4.5 / PostSharp 2.1 / IronPython 2.7 + NumPy/SciPy

- Separate Delta Shell and Plugins (delete *ALL* direct dependencies between plugins) and use NuGet to manage / develop / deploy Delta Shell and plugins

- IEditableObject, EditAction, undo/redo - document, review and simplify. Nested editable objects do not work nice, implement EditableObject attribute, use it consistently with EditAction

- Delta Shell Developer Guide (wiki)

- Create conceptual diagram showing all important components of the Delta Shell / SOBEK

- List and short description of all existing components

- Extend CollectionChange to multiple items

- Remove dependency on IProjectItem in IModel and IDataItem, IActivity: make it a composition and two separate contexts: (Project + Folder + ProjectItem) - (IActivity - Model - ...)

- Extend DataItems to exchange values *only* when it is asked, this is a minimal requirement for parallel (asynchroneous) model runs.

- Bubbling of CollectionChange and PropertyChange must happen automatically in ModelBase, derived models, Functions

- ModelBase OnInputCollectionChange and OnInputPropertyChange etc should work on ALL aggregated and composed items of the model

- MemberwiseClone results in broken objects since it copies ~InstanceCreadentials, find out what to do.

- Geospatial

- Review map editing functionality including topology, interaction, rendering

- Create new map layers using ILayerFactory (introduce it!)

- Eliminate need for custom map tools such as HydroNetworkEditorMapTool

- Move common controls (such as Map Contents, Layer Properties Dialog, Attribute Editor) to SharpMap.UI

- Remove dependencies on IGui from all views

- Clone / CopyFrom, ICloneable / IDeepCloneable / ICopyFrom - clean-up all this mess ... maybe solve it at framework level (clone all properties based on Composition / Aggregation).

- Decide what to copy during copy/paste or other action, use one consistent way for *all* schematization items (e.g. skip / generate Name, Id, HydroId)

- Solve problem with multiple inheritance mapping (NHibernate). Option 1: prevent multiple inheritance at all (use composition). Option 2: implement custom NH type MultipleInhericanceType<TSource, TTarget> and use it during mapping.

- Replace all occurances of ((INotifyPropertyChange)value).PropertyChanged += OnSomethingPropertyChanged; by some nicer code. Think about Aspect on property, Observable<...> or other handy utility applied globally.

- Automatycally set all inverse properties on property set / collection add (e.g. BranchFeature.Branch, Branch.Network ...)

- Merge most of the feature property classes into feature classes, use configured EditActions where necessary for business logic.

- Improve Drag & Drop API

- Improve TreeView API

- In TreeViewNodePresenterBase::OnCollectionChanged it tries to do 'treeView.Nodes.Remove(node)'; that rarely works!! Should remove from node.Parent.Nodes?

- Improve TableView API

- add/remove columns is a mess (see ITableView / TableView)

- Improve provider logic (finding the best fitting view, properties, ContextMenu, NodePresenter etc. for a type)

- Fix working with units

- Extend views to support multiple items (via view context as an option)

- Improve handling of ViewContexs

- Improve view creation / resolving logic (double-click on item opens view for parent>parent>parent object but can also open view for this object), currently it is too implicit (CreateView changes view.Data to a different object and Delta Shell should check it)

- Clean-up database and remove duplicate classes (Delta Shell + SOBEK 3.1 plugins)

- Analyze and remove redundant events (using event tree)

- TreeView

- MapControl

- Replace all Timers by DelayedEventHandler and fine-tune them

- Review and fix all WorkInProgress tests

- Review / fix all HACKs in Delta Shell + SOBEK plugins

- Remove code dependencies between plugins, keep them loosely coupled.

- Fews plugin should not know anything about specific plugin of Flow1D, RTC

- Flow1D should not know about RR, RR should not know about Flow1D, ...

- Make them talk via API (Delta Shell, Hydro, etc.)

- What to do with forms used overall (MapView, CoverageView ...), pull-up?

- Tests

- Improve conventions for integration tests (they must be easy to find, should not be mixed with unit tests)

- Minimize dependencies in integration tests

- Improve existing code (eliminate HACKS)

- Water Flow Model 1D Boundary / Lateral Data

- Review RR schematiaztion and model classes

- Review WAQ classes

- Separate NetworkEditor and HydroNetworkEditor

- Introduce support for file-based models at framework level

- Use per-model directory in project data folder

- Rely on IFileBased for all file-based model items

- Keep model data directory in-sync with Project

- GetAllItemsRecursive

- Use object tree + [Aggregation] instead of custom implementation

- Minimize number of GetAllItemsRecursive calls

- Go to most recent / stable version of 3rd party libraries:

- Gdal

- NetCdf

- IKVM

- etc..

- Take into account class-hierarchy when searching for nodepresenters or property classes

- Use providers for Importers/Exporters similar to views and nodepresenters (what about property classes?)

- Implement something like SynchronizedEventedList which can keep other list in synch with original list and take build/destroy methods, see e.g. HydroNetworkRoutesLayerController, DataItemSet, ...

- Integrate Netron 2.5 + Cobalt into trunk (Swf.Controls)

- Separate data hierarchy/organisation from data-application. Hierarchy/organisation should be responsibility of IProjectItem (Parent, Children, etc...)

We have a modified MSBuild Profiler checked in under build/tools. In case you're interested in the build statistics, here is a screenshot of a clean build for the Delta Shell Sobek 3.0 branch. You can profile a build yourself by running the msbuild-profiler.cmd batch file.

It looks like recommended way to compare collections in new NUnit is:

int[] iarray = new int[] { 1, 2, 3 };

string[] sarray = new string[] { "a", "b", "c" };

Assert.That( new string[] { "c", "a", "b" }, Is.EquivalentTo( sarray ) );

Assert.That( new int[] { 1, 2, 2 }, Is.Not.EquivalentTo( iarray ) );

This will make sure that you get:

Expected: equivalent to < 1.0d, 1.0d, 2.0d, 1.0d, 2.0d, 2.0d > But was: < -999.0d, -999.0d, -999.0d, -999.0d, -999.0d, -999.0d >

instead of:

Assert failed, objects are not equal

Background

Event leaks are a big pain in DeltaShell (or any application). With an event leak I mean when a listener (typically IView, BindingList) listens to an object it should no longer know/care about (Data).

One important convention with regard to unregistering/disposing resources in DeltaSehll is the logic around IView.Data.

When the view opens, its data is set:

IView.Data = dataObject; //view registers to dataObject

The view registers to the dataObject and presents it in the view. When the view is closed by the user, the Data is set to null, which tells the View it should unregister from the previous Data:

IView.Data = null; //view must unregister from previous dataObject

If a view forgets to dispose/unregister from all events, we have an event leak. And these event leaks typically cause memory leaks, crashes and performance degradation (see example).

Example

Suppose a FunctionView does not unregister from a function at all when the view is closed. If the function changes, the event listeners in the closed view will be called. The garbage collector notices the dependency and will keep the view available in memory (memory leak). The event listeners code may update the closed / data-less and potentially disposed view: good chance of crashes. And finally the code in the event listener may trigger heavy updates, like updating the chart axis on every value added to the function (performance degradations).

Step 1 - Don't create them

Hopefully dangers of event leaks are clear now, so what can we do about them? Unfortunately it's very easy to create event leaks, but most views in DeltaShell follow this useful pattern which helps to prevent mistakes:

public void Data

{

get { return data;}

set

{

if (data != null)

{

Unsubscribe(data); //unregister from any old data

}

data = value; //set the data

if (data != null)

{

Subscribe(data); //register to new data

}

}

}

This keeps things clean (as long as Unsubscribe and Subscribe are in sync). Some views however create intermediate objects, such as (Function)BindingLists, which complicate things. As a rule of thumb, each time you do '+=' or 'new FunctionBindingList' (or similar object), you should have a '-=' and a 'Dispose' to match it. Same goes for DelayedEventHandlers etc. Check your disposables!

Composite views must be careful to also set their children's Data to null when their own Data is set to null.

Step 2 - Detect & alert

So how do you know if your view is without event leaks? Although not a guaranteed approach, there is a test helper which can be of use. You can use this test helper to debug and detect any existing leaks, and also use it as a shotgun-test to alert you if someone introduces a event leak afterwards.

To use the helper (TestReferenceHelper), the pattern is typically as follows:

[Test]

public void ViewShouldUnregisterFromData()

{

var testObject = new TestClass(); //your data object

var view = new MyView(); //your view

int numSubscriptionsBefore = TestReferenceHelper.FindEventSubscriptions(testObject);

view.Data = testObject; //at this point numSubscriptions is typically higher: the view is registered to events on the data

view.Data = null; //at this point the view should have unsubscribed again..

int numSubscriptionsAfter = TestReferenceHelper.FindEventSubscriptions(testObject);

//assert no leaks:

Assert.AreEqual(numSubscriptionsBefore, numSubscriptionsAfter); //if the view did its job, these should be equal

}

The method searches the object and its properties (up to some depth) for event subscriptions. There are some overloads on the method that allow you to see the actual subscriptions for debugging purposes. That output looks a bit like this:

{PostSharp}.PropertyChanging

{PostSharp}.PropertyChanged

.Components.CollectionChanged

.Components.PropertyChanged

.Components.CollectionChanged (x4)

.Components.CollectionChanging (x4)

//etc..

You can place this output from before and after in a file comparer to quickly find the leaked events (there's no build-in difference viewer, so that's manual work).

That's it. But while you're at it, you may want to check out another method in TestReferenceHelper: SearchObjectInObjectGraph(target,graph). Check out the usages of this method so see how it can help you to test/debug your Clone/DeepClone methods.

If there are any questions / suggestions please let me know!

Consider the following mapping:

<class name="SharpMap.Layers.GroupLayer, SharpMap" table="grouplayer">

<list name="Layers" lazy="false" cascade="all" collection- type="DeltaShell.Plugins.Data.NHibernate.Collections.Generic.PersistentEventedListType`1[[SharpMap.Layers.ILayer, SharpMap]], DeltaShell.Plugins.Data.NHibernate">

<key column="parent_layer_id"/>

<index column="list_index" />

<one-to-many class="SharpMap.Layers.ILayer, SharpMap"/>

</list>

</class>

<class name="Map" table="map" lazy="false" >

<id name="Id" column="id" type="Int64">

<generator class="native" />

</id>

<list name="Layers" lazy="false" cascade="all-delete-orphan" collection-type="DeltaShell.Plugins.Data.NHibernate.Collections.Generic.PersistentEventedListType`1[[SharpMap.Layers.ILayer, SharpMap]], DeltaShell.Plugins.Data.NHibernate">

<key column="map_id"/>

<index column="list_index" />

<one-to-many class="SharpMap.Layers.ILayer, SharpMap"/>

</list>

</class>

As you can see both grouplayer and Map have a list of layers (ILayer) when are mapped in a <list> element. This will result in a 'schema' like this:

Since a layer is in not the collection of map layers and in a grouplayer at the same time the list_index could be shared right??? Unfortunately this is not the case. Consider the following:

1 Add a map

2 Add a grouplayer to the map

3 To that grouplayer add another layer

4 Save / Load

5 Now move the layer of 3 out of the grouplayer into the map of 1.

6 Save / Load

7 An expcetion will occur: something like 'null index column for collection: SharpMap.Map.Layers'

Now what is happening? During the save of 6 we see the following sql (with NHibernate Profiler)

UPDATE layer

SET parent_layer_id = null,

list_index = null

WHERE parent_layer_id = 2 /* @p0 */

AND id = 6 /* @p1 */

This is were NHibernate is removing the layer out of the grouplayer. NHibernate sets the list_index to null and therefore messes up Maps->Layers relationship. As NHibernate considers the relationships unrelated this is should be valid.

So....for every relationship use a separate index column. It is a good convention to use the same prefix as used for the key column. The mapping of grouplayer then looks like this:

<class name="SharpMap.Layers.GroupLayer, SharpMap" table="grouplayer">

<list name="Layers" lazy="false" cascade="all" collection- type="DeltaShell.Plugins.Data.NHibernate.Collections.Generic.PersistentEventedListType`1[[SharpMap.Layers.ILayer, SharpMap]], DeltaShell.Plugins.Data.NHibernate">

<key column="parent_layer_id"/>

<index column="parent_layer_list_index" />

<one-to-many class="SharpMap.Layers.ILayer, SharpMap"/>

</list>

</class>

Whenever you put an object in a DataItem, NHibernate figures out how to save this 'Value' entity using what is called an Any mapping. As part of this Any mapping mechanism, NHibernate stores the (CLR, .NET) type of the object in a column in the database as fully qualified string. Also sometimes we map types directly, for example ValueType of a dataitem.

This is all fine, until you rename a class..

When NHibernate tries to convert the string back into a .NET type, it will fail and crash. Legacy mappings won't help you there.

To solve this issue, we have added a custom HBM (meta)attribute in DeltaShell: oldClassName. The value of this attribute is used as an alias when resolving types. This happens automagically for the any mapping of dataitem, but if you have mapped a Type column/property directly, make sure to load it using TypeUserType in your legacy mapping.

Example:

Your old class 'Branch' has been renamed to 'Channel'. Now in your legacy mapping you do the following:

<class name="Channel" table="branch" lazy="false">

<meta attribute="oldClassName">Domain.Branch, Domain</meta>

<id name="Id" column="Id" type="Int64" unsaved-value="0"><generator class="native" /></id>

...

</class>

The value of the 'oldClassName' attribute is the string as it appears in the old database, typically 'namespace.class, assembly'.

To summarize: if you rename a persistent class, and there is any chance the object has ever been used as DataItem value, parameter, variable, filter, or had its type mapped directly for any other reason: make sure to add a meta attribute with the old class alias.

NHibernate's lazy functionality is a good way of postpone retrieval of an object and therefore increase performance. However there are a few caveats one should be aware of:

- Your need a custom equals. If you compare a 'lazy' with the underlying 'real' object you want them to be equal. To implement this you need a custom Equals implementation. In DS you can use Unique as a base class. This implements a correct equals for you.

- If the object you want to load does update on changes of another object (propertychanged etc) you have a problem. For example : You lazily load a network coverage. Normally when a branch is removed from the network the coverage auto-removes the values for this branch. BUT when the coverage is lazy-loaded it does not get notified of the branch removal and does not update. When the coverage is unproxied it will still contain locations of the removed branch. In cases where there is no such relationship ,for example a timeseries of a lateral there is no problem and this function can be lazy-loaded.

In this post we will look at migrating from a flat class to a hierarchy of classes.

Given the following situation:

We need to map specific properties for specific subclasses, but the biggest challenge is how to tell NHibernate which subclass to instantiate on load. In the old situation to differentiate between a RiverWeir and a SimpleWeir, one had to check the WeirType (string) property.

In the past, WeirType could contain the following strings:

- simple_weir

- river_weir

- advanced_river_weir

We want NHibernate to use this property to decide which subclass to create, and we want to map both 'river_weir' and 'advanced_river_weir' to the RiverWeir class.

Let's first assume we don't have this 'advanced_river_weir'. In that case we can use the normal discriminator system of NHibernate (note: discriminator must come after Id!). We also map the specific properties while we're at it:

<class name="Weir">

<id name="Id">

<generator class="guid" />

</id>

<discriminator column="WeirType"/>

<property name="Name" />

<property name="CrestLevel" />

<property name="GateHeight" />

<subclass name="SimpleWeir" discriminator-value="simple_weir" >

<property name="DischargeCoefficient" formula="SimpleWeirDischargeCoefficient" />

</subclass>

<subclass name="RiverWeir" discriminator-value="river_weir" >

<property name="SubmergeReduction" formula="RiverWeirSubmergeReduction" />

</subclass>

</class>

Now lets look at the more complex situation with 'advanced_river_weir' included. Again, formula comes to the rescue, this time in 'discriminator'. This way you can influence the value of what NHibernate sees as the discriminator value. The possible discriminator values you return must match with the discriminator-value's you supply for each subclass:

<class name="Weir">

<id name="Id">

<generator class="guid" />

</id>

<discriminator formula="case when WeirType in ('river_weir', 'advanced_river_weir') then 'RiverWeir' else 'SimpleWeir' end"/>

<property name="Name" />

<property name="CrestLevel" />

<property name="GateHeight" />

<subclass name="SimpleWeir" discriminator-value="SimpleWeir" >

<property name="DischargeCoefficient" formula="SimpleWeirDischargeCoefficient" />

</subclass>

<subclass name="RiverWeir" discriminator-value="RiverWeir" >

<property name="SubmergeReduction" formula="RiverWeirSubmergeReduction" />

</subclass>

</class>

Note that I have changed the discriminator-value of both subclasses and also in the discriminator formula, to indicate you can choose any.

For source code: https://repos.deltares.nl/repos/delft-tools/trunk/shared/NHibernateBackwardsCompatibility

In this post I will look at what is probably a less common situation, but it boasts slightly more complex SQL formulas and a more entwined domain model.

Here is the situation we have:

We want to merge the classes Pump and PumpDefinition into one. The old database looks like this:

We should have no trouble maintaining the Pumps list in Network; nothing changed there. So we just need to retrieve the Pump properties DefinitionName and Capacity from another table: pump_definition.

Let's start with the basics migrating HBM, which is almost the same as the new HBM:

<class name="Network">

<id name="Id"> <generator class="guid" /> </id>

<bag name="Pumps" cascade="all-delete-orphan">

<key column="PumpId"/>

<one-to-many class="Pump"/>

</bag>

</class>

<class name="Pump">

<id name="Id">

<generator class="guid" />

</id>

<property name="Name" />

<property name="DefinitionName" ???? />

<property name="Capacity" ???? />

</class>

It's not finished yet, we need to do something about DefinitionName and Capacity. Let's start with the DefinitionName. Using the formula field and simple SQL we can retrieve it from the pump_definition table, we just need to match the Ids:

SELECT def.Name FROM pump_definition def WHERE def.Id = Definition

Note that 'Definition' here is the column (foreign key) in the old Pump table.

For Capacity we can do something quite similar, so the HBM needs to be adjusted to the following:

<property name="DefinitionName" formula="( SELECT def.Name FROM pump_definition def WHERE def.Id = Definition)"/>

<property name="Capacity" formula="( SELECT def.Capacity FROM pump_definition def WHERE def.Id = Definition)"/>

The resulting cleaned-up SQL looks like this:

SELECT pump.NetworkId, pump.Id, pump.Id, pump.Name, ( SELECT def.Name FROM pump_definition def WHERE def.Id = pump.Definition), ( SELECT def.Capacity FROM pump_definition def WHERE def.Id = pump.Definition) FROM Pump pump WHERE pump.NetworkId=...

This should have concluded the series on using hbms for backward compatibility, however I will do at least one more post, regarding class inheritance. For source code see first post in series.

This is part two in the series describing how to write a 'legacy'/'migrating' hbm for backward compatibility.

The refactoring in this post is the splitting of a class 'Bridge'. See the class diagram:

Originally there was one big Bridge class, but later it was decided to split it into a Bridge and a BridgeDefinition. Also the properties don't exactly match:

- SideArea: no longer exists

- Pillars -> renamed to NumPillars

- Color: should always be 'RED'

- IsOpen: new

Here are the old HBM (for reference) and the new HBM:

<class name="Bridge">

<id name="Id"> <generator class="guid" /> </id>

<property name="Name" />

<property name="Color" />

<property name="HasRoad"/>

<property name="Width"/>

<property name="Height"/>

<property name="SideArea"/>

<property name="Type"/>

<property name="Pillars"/>

</class>

<class name="Bridge">

<id name="Id"> <generator class="guid" /> </id>

<property name="Name" />

<property name="Color" />

<property name="HasRoad"/>

<property name="IsOpen"/>

<many-to-one name="Definition" cascade="all-delete-orphan"/>

</class>

<class name="BridgeDefinition">

<id name="Id">

<generator class="guid" />

</id>

<property name="Type"/>

<property name="Width"/>

<property name="Height"/>

<property name="NumPillars"/>

</class>

We need to tackle four property changes and the class split itself. Let's start with the property changes:

Property |

Change |

Solution |

|---|---|---|

SideArea |

No longer exists |

Do nothing! |

Pillars |

Renamed to NumPillars |

<property name="NumPillars" formula="Pillars"/> |

Color |

Should always be 'RED' when loading old data |

<property name="Color" formula="'RED'"/> |

IsOpen |

New property, should always be 'true' when loading old data |

<property name="IsOpen" formula="1"/> |

Finally we need to tackle how to split the class. Fortunately NHibernate has something called components, where it persists two classes into one table. We just tell NHibernate to threat the table as such a table, with BridgeDefinition being a 'component' of Bridge and NHibernate will load the single table into two entities, just like we want. The resulting HBM looks like this:

<class name="Bridge">

<id name="Id"> <generator class="guid" /> </id>

<property name="Name" />

<property name="Color" formula="'RED'"/>

<property name="HasRoad"/>

<property name="IsOpen" formula="1"/>

<component name="Definition" class="BridgeDefinition">

<property name="Type"/>

<property name="Width"/>

<property name="Height"/>

<property name="NumPillars" formula="Pillars"/>

</component>

</class>

Note that the Id property of the BridgeDefinition component is not mapped. However when saving this class in the new session (with the new hbm's), it will receive an Id anyway.

The resulting SQL:

SELECT bridge.Id, bridge.Name, bridge.HasRoad, bridge.Type, bridge.Width, bridge.Height, 'RED', 1, bridge.Pillars FROM Bridge bridge WHERE bridge.Id=...

Note that the splitting into a component is handled by NHibernate in code and has no effect on the SQL.

Hopefully this post shows that what appears to be a more difficult refactoring, turns out to be pretty easily mapped. See previous post for SVN link to source code. Next up: class merge.

In the upcoming posts I will give three examples of how to implement backward compatibility using hbm mappings.

In this post I start with a simple refactoring: a 'Product' class for which a boolean property was renamed and, as a bonus, also negated. See the class diagram for an overview:

So, originally a product was marked as 'Available', but it was later decided it would be more logical to use a 'Discontinued' flag. This is not just a simple rename! We need to do something equivalent to 'Discontinued = !Available' when loading products from the old database. Specifically, we need to write a nhibernate HBM mapping file which reads the old database format into the new object model.

The old database format is as follows:

And you can imagine the original HBM with which this was saved would look something like this:

<?xml version="1.0" encoding="utf-8" ?>

<hibernate-mapping xmlns="urn:nhibernate-mapping-2.2" assembly="..." namespace="...">

<class name="Product">

<id name="Id"> <generator class="guid" /> </id>

<property name="Name" />

<property name="Category" />

<property name="Available"/>

</class>

</hibernate-mapping>

The new HBM looks like this:

<?xml version="1.0" encoding="utf-8" ?>

<hibernate-mapping xmlns="urn:nhibernate-mapping-2.2" assembly="..." namespace="...">

<class name="Product">

<id name="Id"> <generator class="guid" /> </id>

<property name="Name" />

<property name="Category" />

<property name="Discontinued"/>

</class>

</hibernate-mapping>

To migrate from the old database to the new object model, we must create another, special mapping. This mapping will only be used for loading, not for saving, so we can use some special features like embedding SQL queries into the HBM.

Let's start by writing the basics. We can map most properties 1-to-1, as you can see here:

<?xml version="1.0" encoding="utf-8" ?>

<hibernate-mapping xmlns="urn:nhibernate-mapping-2.2" assembly="..." namespace="...">

<class name="Product">

<id name="Id"> <generator class="guid" /> </id>

<property name="Name" />

<property name="Category" />

<property name="Discontinued" ????what to do here???? />

</class>

</hibernate-mapping>

Less trivial is how to retrieved the correct value for the 'Discontinued' property. We must keep in mind that we can only map to new properties, so we cannot have a property with name="Available" here. The 'Available' value is however still there in the database, so in this case we can solve it by using the 'formula' field inside a property tag, to insert SQL queries and logic to get values from the database.

Specifically, we can do the following:

<property name="Discontinued" formula="( Available = 0 )"/>

To understand why this works, it is important to understand how NHibernate processes these formulas. NHibernate inserts the formula as a subquery into the larger SQL. This is the cleaned-up version of what NHibernate generates:

SELECT product.Id, product.Name, product.Category, ( product.Available = 0 ) FROM Product product WHERE product.Id=...

Since 'Available' is a boolean value stored as integer, we do a '=0' compare to inverse the boolean value. Also notice that NHibernate will insert 'product.' in front of 'Available', since it recognizes it as a column in the database.

We could also have inserted an entire SQL query, for example:

<property name="Discontinued" formula="SELECT p.Available = 0 FROM Product p WHERE p.Id = Id"/>

The resulting (cleaned-up) SQL is as you would expect:

SELECT product.Id, product.Name, product.Category, ( SELECT p.Available = 0 FROM Product p WHERE p.Id = product.Id ) FROM Product product WHERE product.Id=...

The result is equivalent (although quite a bit longer), but hopefully this shows how powerful subqueries can be. In the formula field you could also retrieve values from entirely different tables, combine columns, do simple math, or set default values.

In upcoming posts I will show examples of backward compatibility for slightly more complex refactorings; class merging and class splitting. Most of that will be based on retrieving values with the formula field, but also using some other techniques. The source code for these three (and possibly more) testcases can be found on SVN: https://repos.deltares.nl/repos/delft-tools/trunk/shared/NHibernateBackwardsCompatibility

This post describes a design for implementing backwards-compatibility in deltashell.

Backwards-compatibility : The ability to open project files written by an older version of the application in the current application.

Global idea / setup

The idea is to use custom hbm.xml mapping to convert older project databases to the current object model. For example a plugin has made a release 1.0 which should be supported. Now if the mappings change during further development something has to be done to read the old projects. We need specific mappings to read the old files next to the current mappings. The situation would be as follows:

So the Person.hbm.1.0.xml describes the mapping of files written with 1.0 to the current version of the person. This mapping wil change as the Person evolves and we still want to support read old project files. NHibernate has a rich feature-set to get the data from the old schema to the new objects such as formulas, custom sql, usertypes etc. Another advantage of this approach is that backwards compatibility is solved using stand NHibernate technology and not DS specific stuff.

What happens when an old project gets openened?

If Deltashell opens an old project it creates a session for that version of the DB. After that all objects are migrated to a new session with the current configuration. Then when the project is saved the database is in a new format.

How does DS know which mapping to use?

Each project database contains a table with plugin version information with which the db was written. The version is the same as used for the plugin assembly. This could look like this:

Component |

Release Version |

|---|---|

Framework |

1.0.0 |

Plugin1 |

0.6.0 |

Plugin2 |

1.1.0 |

Now development continues and breaking changes occur. This could look like this :

When the project is saved the following information is entered in the versions table

Component |

Version |

|---|---|

Framework |

1.2 |

Plugin1 |

0.6.1 |

Plugin2 |

1.1 |

Now when that project is loaded the information is this:

Component |

Version |

|---|---|

Framework |

1.3 |

Plugin1 |

0.6.1 |

Plugin2 |

1.2 |

Both framework and plugin2 have new versions. DeltaShell has to find to 1.2 to 1.3 mappings for framework and 1.1 to 1.2 mappings for plugin2. Since not all entity mappings change the logic is as follows.

Per mapping file

This section and the implemented logic has been updated June 2012

The rule per mapping file is simple:

- Take the first mapping file with a version equal or more recent than the persisted version.

Revision / build version numbers are ignored; 3.0.5 is treated as 3.0. The idea behind this is that file format changes should only occur in major or minor versions.

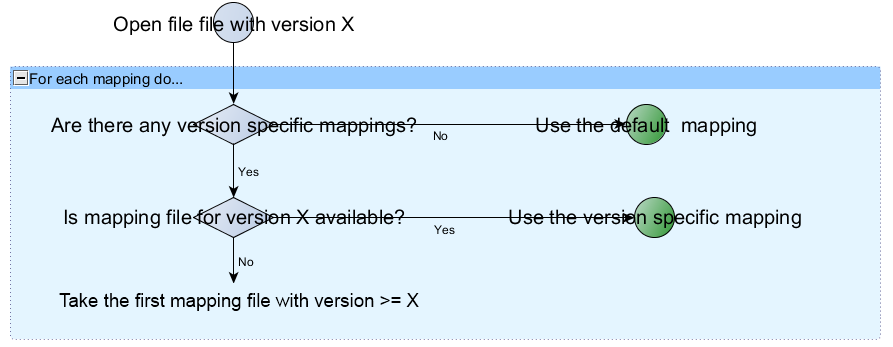

More elaborate, in a chart:

So how does this affect plugin development?

If you release a version which you want to support you should include a test reading a project file of that version. So when you release your plugin 1.5 you should write a test called ReadVersion1_5 in your plugin that reads a project that covers all possible mappings you have in your plugin. You should check you get everything back from the project as you expect it. The dsproj file is checked into svn and the test should work as nothing changed yet. All is well....

After a while you want to refactor your class A. You also change the mapping A.hbm.xml. But the test ReadVersion1_5 you wrote on release now fails because reading the old db with the new a.hbm.xml does not work! What to do? You can chose not to refactor A and do something else OR you could add a A.1.5.hbm.xml mapping that converts the old db to the new object. Once you check in that new mapping file *ReadVersion1.5 should use that specific file (instead of A.hbm.xml) and the test should pass again.

So for a plugin it boils down to 4 points:

1 Write a ignored (not run on BS) test that can create a dsproj file with all persistent objects you have in your plugin. You create a 'representative' project here. Update this test as your plugin evolves and add the category [BackwardCompatibility]

2 If you have a release with file-format changes run test 1) and copy the result to the release version. Write a test that reads in that project file and add the category [BackwardCompatibility]

For example if you release 1.1 you should run the test of 1) and copy the result to project1_1.dsproj + data. Check in this project and write a test ReadVersion1_1 that reads this project. You will never change this checked in project again.

3 If the test of 2) breaks add version specific mappings until the test passes again.

4 If you longer support a version delete the test and the mapping you created for it.

Delete logic

This is a WorkInProgress description of the NHProjectRepository logic in DS.

NHProjectRepository uses a custom method te determine which objects can be deleted from the database. Unfortunately NHibernate can not do this because NHibernate cascades are evaluated very locally. See for example http://fabiomaulo.blogspot.com/2009/09/nhibernate-tree-re-parenting.html Basically NHibernate can delete object B if object A is deleted but it cannot check if object B is in use elsewhere.

The solution:

S - P = O

SessionObjects minus ProjectObjects are Orphans. So everything that is in session but not in project can be deleted. Now our save logic look liks this

1) Determine session objects using NHibernate session's entityentries

2) Determine project objects using EntityWalker (more about this later)

3) Determine orphaned objects by substracting 1 -2 and delete them from the session

4) Flush the session.

EntityWalker and EntityMetaModel

EntityWalker is a class that based on a EntityMetaModel can traverse a object tree. EntityMetaModel describes the relationship between objects and the strength of the relationship _(composition vs aggregation). The EntityMetaModel can be determined by looking at NHibernate's configuration and this is done by the NHibernateMetaModelProvider. Using this EMM the EntityWalker can create a set of objects which are reachable from the project root. The EntityWalker uses only Composite relationships to traverse the object tree. So something that is only reachable by aggregate relationships is not considered in project (and will be considered an orphan if is in session).

NHibernateMetaModelProvider

This class is responsible for creating a EntityMetaModel based on a NHibernate configuration. It uses NHibernate's cascade styles to determine the strength of the relationship. Here the rule of thumb is that when a cascade includes a delete the relation is composition and otherwise it is a mere aggregate relationship. So

- All -> Composite

- All-Delete-Orphan -> Composite

- Others -> Aggregation

Customizing NHibernate deletes with DeltaShellDeleteEventListener

NHibernate still does cascade on relationships and this can be a problem. For example when a object is reparented. In the sample below object A was a part-of (composition) D1 but it moved to D2. All relationships below are composite. Now if the cascade of the mapping between D1 and A is all NHibernate will delete A because D1 is deleted. This is obviously not what we want because D2 uses A now. This will result in a 'deleted object will be resaved by cascade' exception. To prevent this we need to intercept Nhibernate's deletes and verify that the object really should be deleted. This can be done by checking if the object that NH wants to delete is a orphan. This is done replacing Nhibernate's DefaultDeleteListener with our own and overriding the OnDelete method. Here a check is done with the current orphans list. If the entity-to-delete is an orphan the delete is processed further. Otherwise it is cancelled. Note: Using the IPreDeleteEventListener did not work because cascade and handled first and these eventhandlers are fired very late in the pipe-line. Hence the override on this class.

Further reading

Fowler about aggregate vs composition :http://martinfowler.com/bliki/AggregationAndComposition.html

NHibernate cascades : http://ayende.com/blog/1890/nhibernate-cascades-the-different-between-all-all-delete-orphans-and-save-update